How Vision Systems Work in Robotics

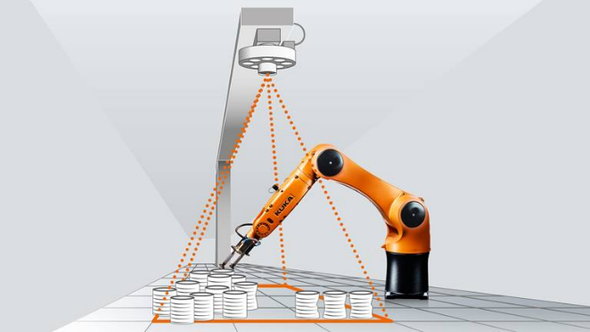

Machine vision systems enable robots to identify objects, whether for lifting or inspecting.

April 14, 2021

Robot vision systems are commonly referred to as machine vision. The technology is used in several industrial processes, including material inspection, object recognition, and pattern recognition. Each industry has its own priorities for machine vision. In healthcare, pattern recognition is critical. In electronics production, component inspection is important. In banking, the recognition of signatures, optical characters, and currency matters.

Robotic vision systems can include:

1D technologies measure the height of an object moving along a conveyor or read barcodes.

2D scanners can read 2D barcodes as well as 1D barcodes, including 1D barcodes that are dented or crinkled. They are similar to an everyday camera and can recognize shapes.

3D technology can take more exact measurements. It can perceive depth as well as shapes.

Structured light sensors project a light pattern onto a part and analyze the part’s dimensions by reading how that pattern deforms across its surface.

Time-of-flight cameras use infrared light to determine depth information. The sensor emits a light signal, which hits the subject and returns to the sensor. The time the signal takes to bounce back is measured and provides depth-mapping capabilities.
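The time-of-flight principle reduces to simple arithmetic: light travels to the subject and back, so the distance is half the round-trip time multiplied by the speed of light. The sketch below is only an illustration of that calculation. The function names and the idea of a per-pixel grid of round-trip times are assumptions made for the example, not the interface of any particular camera.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def tof_depth_m(round_trip_s: float) -> float:
    """Distance in meters to the subject, given the round-trip time of a light pulse."""
    if round_trip_s < 0:
        raise ValueError("round-trip time cannot be negative")
    # The pulse covers the distance twice (out and back), so halve the path length.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


def depth_map(round_trip_times):
    """Convert a grid of per-pixel round-trip times (seconds) into depths (meters)."""
    return [[tof_depth_m(t) for t in row] for row in round_trip_times]


if __name__ == "__main__":
    # A 10-nanosecond round trip corresponds to roughly 1.5 meters.
    print(round(tof_depth_m(10e-9), 3))
```

The short times involved (a few nanoseconds per meter) are why these sensors need precise timing electronics.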

We talked with machine vision company Cognex to get an understanding of how vision systems work with robotics. “There are two main applications for robot guidance. You take an image of the scene and you find something and input that thing’s coordinates. This is the angle for 2D and 3D systems. The robot can see an object and will do something with it,” Brian Benoit, senior manager of product marketing for Cognex, told Design News. “Another application is inspecting an object. A robot holds a camera and the robot moves the camera around the part to get certain images.”
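As a rough illustration of the first application (finding something in an image and reporting its coordinates), the sketch below locates a bright object in a small grayscale image by thresholding and averaging pixel positions. This is a deliberately simple stand-in, not Cognex’s method; the function name, the threshold value, and the list-of-lists image format are all assumptions for the example.

```python
def find_object_centroid(image, threshold=128):
    """Return the (x, y) pixel centroid of all pixels brighter than threshold.

    image is a list of rows, each a list of grayscale values (0-255).
    x is the column index and y is the row index. Returns None if nothing is found.
    """
    count = 0
    sum_x = 0
    sum_y = 0
    for y, row in enumerate(image):
        for x, value in enumerate(row):
            if value > threshold:
                count += 1
                sum_x += x
                sum_y += y
    if count == 0:
        return None
    return (sum_x / count, sum_y / count)


if __name__ == "__main__":
    scene = [
        [0, 0, 0, 0],
        [0, 255, 255, 0],
        [0, 255, 255, 0],
        [0, 0, 0, 0],
    ]
    print(find_object_centroid(scene))  # (1.5, 1.5)
```

The result is in pixel units. Before a robot can act on it, it has to be converted into the robot’s own coordinate frame.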

Robots Vary in Style

For Cognex, the vision system often goes to integrators who cobble together automation systems. “Sometimes our customer is an integrator who is building a system with robots that need vision,” said Benoit. “The types of robots we work with are manipulating robots. They may lift a large payload in a cage. Those are fast and unsafe to be around.”

In other cases, the customer is the end-user working with smaller, safer robots. “We also work with collaborative robots that are designed to work side-by-side with people. They’re not going to use as much force,” said Benoit. “They often do pick-and-place.” He noted that the vision systems from Cognex are not the same as the guidance systems used by mobile robots. “We haven’t seen much traction with vision on warehouse robots. They use sensors that are integrated into their system, so they don’t need an outside camera.”

While the large caged robots have a decades-long history, collaborative robots are a relatively new addition to the automation world. “The cage robot market is stable. The most common customer is in automotive. The need for a vision system with caged robots isn’t great because there isn’t as much variability,” said Benoit. “With collaborative robots, you have a more unstructured environment so there are a lot more vision applications. It’s a developing market, so our strategy is to work well with any robot manufacturer. We develop software interfaces for the robot manufacturer so they can easily work with our system. With each robot, we have to develop an interface.”

Robots See in the Software Brain

The human eye is a sensor. It’s our brain that understands the visual feed from our eyes. Same with robots. The robot’s software interprets the visual data. “Software is the brain behind everything. We have two types of vision. One set of algorithms is rules-based. It looks for patterns and edges. The other algorithm is in the deep-learning space where the software is trained by example. We’re seeing traction in both,” said Benoit. “The goal of the software is a good handshake between the robot and the vision system. We train the robot and the vision system to know where they are together. When the camera sees something, the robot knows where to go in response.”
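One way to picture the “handshake” Benoit describes is as a calibration step that maps camera pixels to robot coordinates. The sketch below assumes a flat work surface and a fixed camera, and fits a 2D affine transform from three reference points whose positions are known in both frames. It illustrates the general idea only and is not the procedure Cognex uses; all function names and the sample coordinates are hypothetical.

```python
def _det3(m):
    """Determinant of a 3x3 matrix given as a list of rows."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def _solve3(m, rhs):
    """Solve the 3x3 linear system m * x = rhs using Cramer's rule."""
    d = _det3(m)
    if abs(d) < 1e-12:
        raise ValueError("calibration points must not lie on one line")
    solution = []
    for i in range(3):
        replaced = [row[:] for row in m]
        for r in range(3):
            replaced[r][i] = rhs[r]
        solution.append(_det3(replaced) / d)
    return solution


def fit_affine(pixel_pts, robot_pts):
    """Fit x = a*u + b*v + c and y = d*u + e*v + f from three point pairs."""
    m = [[float(u), float(v), 1.0] for u, v in pixel_pts]
    coeffs_x = _solve3(m, [x for x, _ in robot_pts])
    coeffs_y = _solve3(m, [y for _, y in robot_pts])
    return coeffs_x, coeffs_y


def pixel_to_robot(affine, u, v):
    """Convert a pixel coordinate (u, v) into robot coordinates (x, y)."""
    (a, b, c), (d, e, f) = affine
    return (a * u + b * v + c, d * u + e * v + f)


if __name__ == "__main__":
    # Hypothetical calibration: three pixel locations and the matching robot positions in mm.
    pixels = [(0, 0), (100, 0), (0, 100)]
    robot_mm = [(200.0, 50.0), (250.0, 50.0), (200.0, 0.0)]
    calibration = fit_affine(pixels, robot_mm)
    print(pixel_to_robot(calibration, 40, 20))
```

A production system would typically use many more reference points and a least-squares fit, and would also correct for lens distortion and camera tilt. Three points are the minimum needed to pin down an affine map.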

The vision software includes a plug-and-play connection for each robot maker. “We try to make the integration as simple as possible. We have the right hooks in our software to send signals back and forth,” said Benoit. “We also rely on integrators for help on that. We work directly with some robot companies, such as Universal Robots. They have vision software that’s maintained by Cognex and works directly on their robots.”

Part of what sets collaborative robots apart from traditional, caged robots is that they are designed to be trained by users rather than programmed by integrators. “Universal Robots’ business model is to skip the integrators. They have a point-and-click driver,” said Benoit. “It’s important that when they work with peripherals, they can just plug it in. They do it with our vision system and with different end effectors.”

Putting the Vision-Enhanced Robot to Work

The vision system that allows the robot to identify a part and pick it up is no different from the vision system that allows the robot to do a quality check on a part. “When the robot has a vision system – whether it’s on a conveyor or the end of a robot arm – it works the same. The vision system is mounted on a robot arm, and the arm moves around,” said Benoit. “The software analyzes the feed from the vision. In many cases, the software engages in deep learning. We integrated a system, all of the analysis exists inside the vision platform. We use a neural network inside the camera.”

The software for the vision system may be programmed to identify a specific object, or it may be designed to learn about what it’s seeing. “The deep learning is different from conventional programming. It’s like teaching a child what a house is. You don’t normally tell the child the coordinates of a house. You say, ‘That’s a house, that’s a house, and that’s an office building.’ Our software is designed to do that in manufacturing.”
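The difference between writing rules and training by example can be shown with a toy classifier. The sketch below is not deep learning and not a neural network. It uses a much simpler nearest-centroid method as a stand-in, purely to show the workflow of labeling examples and letting the software generalize. The features (number of floors, width in meters) and every value in the training set are made up for illustration.

```python
import math
from collections import defaultdict


def train(examples):
    """Learn one average feature vector (centroid) per label.

    examples is a list of (features, label) pairs, where features is a tuple of numbers.
    """
    grouped = defaultdict(list)
    for features, label in examples:
        grouped[label].append(features)
    return {
        label: tuple(sum(column) / len(rows) for column in zip(*rows))
        for label, rows in grouped.items()
    }


def predict(centroids, features):
    """Return the label whose centroid is closest to the given features."""
    return min(centroids, key=lambda label: math.dist(centroids[label], features))


if __name__ == "__main__":
    # Made-up examples: (floors, width in meters) with a human-supplied label.
    labeled = [
        ((2, 10), "house"),
        ((1, 12), "house"),
        ((20, 40), "office building"),
        ((35, 60), "office building"),
    ]
    model = train(labeled)
    print(predict(model, (3, 11)))   # house
    print(predict(model, (25, 50)))  # office building
```

No rule such as “more than five floors means office building” is ever written down. The boundary comes from the labeled examples, which is the same idea Benoit describes, though a real deep-learning system learns directly from images rather than from hand-picked measurements.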

Rob Spiegel has covered manufacturing for 19 years, 17 of them for Design News. Other topics he has covered include automation, supply chain technology, alternative energy, and cybersecurity. For 10 years, he was the owner and publisher of the food magazine Chile Pepper.
